Filter bubbles, algorithms and information pluralism in the digital age

Digital media have given citizens new ways to access and consume news. However, these new information channels have raised a significant problem: so-called filter bubbles, which act as real filters on digital communication and affect information pluralism.

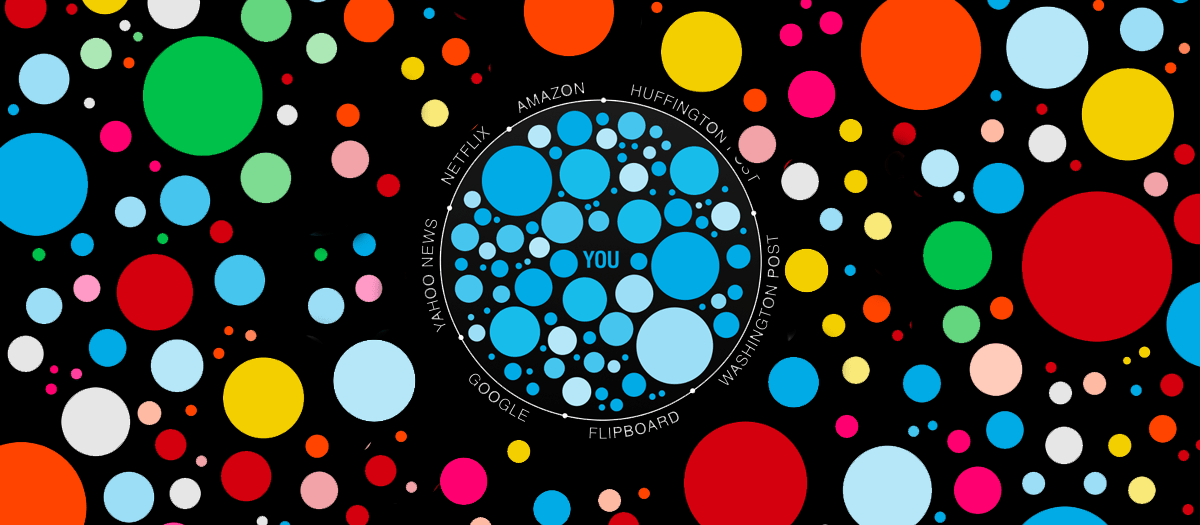

The term, coined by Eli Pariser, refers to the situation in which a web user receives only information and opinions consistent with their own beliefs. Through algorithms, filter bubbles personalize the online experience, creating – as the Treccani Encyclopedia notes – a virtual environment that is scarcely permeable to novelty and highly self-referential.

The dynamic resembles a pattern common across the web, where the most-clicked content tends to be shown again and again. A familiar example is e-commerce: after viewing a product, such as a pair of shoes or an electronic device, one often finds it again among subsequent suggestions.

While in these cases the logic remains purely commercial, in the field of information, personalization can have more significant effects, limiting the diversity of news to which users are exposed. The risk becomes particularly relevant in modern democracies, where citizens, through voting, determine the political direction of the country.
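To make this feedback loop concrete, here is a minimal sketch in Python. It does not describe how any real platform ranks content. The catalog, the `recommend` and `simulate` functions, and the assumption that the user always clicks the first suggestion are all hypothetical and chosen only for illustration.

```python
from collections import Counter


def recommend(catalog, click_history, k=3):
    """Return the k items whose topic the user has clicked most often.

    Hypothetical ranking rule: items are ordered only by past clicks on
    their topic; ties keep the original catalog order (sorted is stable).
    """
    topic_clicks = Counter(click_history)
    ranked = sorted(catalog, key=lambda item: -topic_clicks[item["topic"]])
    return ranked[:k]


def simulate(catalog, rounds=5, k=3):
    """Simulate a user who always clicks the first suggestion.

    Returns, for each round, the set of topics that appeared in the feed.
    """
    history = []
    shown_topics = []
    for _ in range(rounds):
        feed = recommend(catalog, history, k)
        shown_topics.append({item["topic"] for item in feed})
        history.append(feed[0]["topic"])  # crude model of user behaviour
    return shown_topics


# Toy catalog: titles and topics are invented for the example.
catalog = [
    {"title": "Budget vote explained", "topic": "politics-a"},
    {"title": "Opposition response", "topic": "politics-b"},
    {"title": "Local football results", "topic": "sport"},
    {"title": "Coalition talks", "topic": "politics-a"},
    {"title": "Party rally report", "topic": "politics-a"},
    {"title": "New phone review", "topic": "tech"},
]

# Number of distinct topics in the feed, round by round.
print([len(topics) for topics in simulate(catalog)])  # [3, 1, 1, 1, 1]
```

Under these assumptions, a single click is enough to collapse the feed from three topics to one. Real recommender systems are far more complex, but the sketch shows why ranking on past behaviour alone tends to narrow what a user sees.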

By reducing access to differing viewpoints, filter bubbles hinder the debate and dialectic that are essential to political and social life. In this way, users become less inclined to engage with alternative content, developing a partial understanding of reality. The absence of transparency mechanisms therefore risks influencing democratic processes – especially in a context like Italy, where, according to Censis data, the use of social networks as the main source of information has increased since the start of the pandemic.

Faced with this challenge, the response cannot be only technological but must also be regulatory. At the European level, the Digital Services Act (Regulation (EU) 2022/2065) imposes transparency obligations on recommender systems, data access for authorities and researchers, and greater human oversight of automated decisions. The Regulation identifies systemic risks that include negative effects on democratic processes and civic discourse. In particular, Article 38 requires very large online platforms, such as TikTok, Instagram, or Facebook, to offer at least one recommendation option not based on profiling, while Articles 34 and 35 oblige them to assess and mitigate the impact of their algorithms on disinformation and pluralism.

On the Italian side, the Constitutional Court, in judgment no. 44 of 2025, clarified that pluralism consists in the right of every citizen to access a multiplicity of information sources, as guaranteed by Article 21 of the Constitution, Article 11 of the EU Charter of Fundamental Rights, and Article 10 of the ECHR, so as to form a free and informed opinion necessary for the exercise of political rights.

However, the issue has been chiefly addressed through case law on the protection of freedom of expression against “censorial algorithms.” A telling example is the case decided by the Rome Court: the interim order of 12 December 2019 (RG no. 59264/2019) ordered Facebook to reactivate the CasaPound page, citing the constitutional principles of pluralism and freedom of expression (Article 21 Const.). The subsequent judgment on the merits no. 17909/2022 overturned that stance, revoking the interim order and legitimizing the private moderation power of platforms on the basis of Community standards and EU law.

In light of the phenomenon described, this focus provides key bibliographic, normative, and jurisprudential references to explore an issue which, in the digital information era, seems destined to maintain – and perhaps increase – its relevance over time.

(Focus by Bruno Pitingolo)

Selected bibliography:

«Bolla di filtraggio. Neologismi (2026)», Treccani: https://www.treccani.it/vocabolario/neo-bolla-di-filtraggio_(Neologismi)/

C. Denaro, “L’impatto delle bolle di filtraggio sui cittadini UE: bias e camere dell’eco alimentano la misinformazione”, in Lextech Hub, 7 November 2024.

D. Palano, “Bubble Democracy. La fine del pubblico e la nuova polarizzazione”, Morcelliana, Brescia, 2020.

E. Longo, “Dai big data alle «bolle filtro»: nuovi rischi per i sistemi democratici”, in Percorsi costituzionali, 1/2019, pp. 29-44.

E. Pariser, “The filter bubble: what the internet is hiding from you”, Penguin, London, 2012.

F. Sammito, G. Sichera, “L’informazione (e la disinformazione) nell’era di Internet: un problema di libertà”, in Costituzionalismo.it, 1/2021, pp. 77-131.

G. Figà Talamanca, S. Arfini, “Through the Newsfeed Glass: Rethinking Filter Bubbles and Echo Chambers”, in Philosophy & Technology (2022) 35: 20, pp. 1-34.

G.E. Vigevani, O. Pollicino, C. Melzi D’Eril, M. Cuniberti, M. Bassini, “Diritto dell’informazione e dei media”, Giappichelli, Torino, 2022.

J. Möller, “Filter bubbles and digital echo chambers”, in H. Tumber & S. Waisbord (Eds.), “The Routledge Companion to Media Disinformation and Populism”, 2021, pp. 92-100.

M. Bianca, “La filter bubble e il problema dell’identità digitale”, in MediaLaws, 2/2019, pp. 1-15.

M. Paolanti, “Nulla salus extra bollam: il principio del pluralismo informativo nell’epoca delle echo chambers”, in La Nuova Giuridica, 2023/1, pp. 121-130.

R. Riordan, “A Case Study of Judicial-Legislative Interactions via the Lens of the DSA’s Host Liability Rules”, in European Papers, Vol. 10, No. 1, 2025, pp. 259-291.

Related case law:

Rome Court, order of 12 December 2019.

Rome Court, judgment no. 17909/2022.

Constitutional Court, judgment no. 44/2025.

General Court of the European Union (Seventh Chamber), judgment of 19 November 2025, Case T-367/23, Amazon EU Sàrl v European Commission.

Selected legislation:

Regulation (EU) 2022/2065, Digital Services Act.

Directive (EU) 2018/1808 on Audiovisual Media Services.

Soft law:

Recommendation CM/Rec(2018)1 of the Committee of Ministers on «media pluralism and transparency of media ownership».

Other useful documentation:

17th Censis Report on Communication: «I media dopo la pandemia»: https://www.compubblica.it/it/17%C2%B0-rapporto-censis-sulla-comunicazione-i-media-dopo-la-pandemia.

AGCOM Report «L’informazione al tempo degli algoritmi. Il Rapporto AGCOM sul consumo di informazione», in Confindustria: https://www.confindustriaradiotv.it/linformazione-al-tempo-degli-algoritmi-il-rapporto-agcom-sul-consumo-di-informazione/.

Council of Europe study on «algorithmic transparency and accountability of digital services»: https://rm.coe.int/iris-special-2023-02en/1680aeda48.